Can AI Prompt Us to Ask New Questions?

Not with chatbots as we know them, but perhaps with what comes next

by Dan Cohen

[This is the second piece in a series on finding the right line between human thought and AI assistance, focused on the stages of scholarly work from initial ideas through research and analysis to publication, although I believe much of this discussion is applicable to intellectual work beyond the academy. The miniseries began with this introduction. This issue considers the genesis of an exciting research idea and whether AI can help to ignite that spark.]

A year ago, with tongue firmly in cheek, I took one of the difficult comprehensive exams that are being used to grade the intelligence of AI models, just to see how I’d measure up. Promptly, and embarrassingly for a history professor, I failed the history section of that exam. (Too many questions about naval battles.) The best LLMs had already surpassed me, and now, a year later, the latest models are roughly three times as able as they were a year ago on that same exam, still far from perfect but capable of producing accurate answers to very tough questions from the full range of academic disciplines.

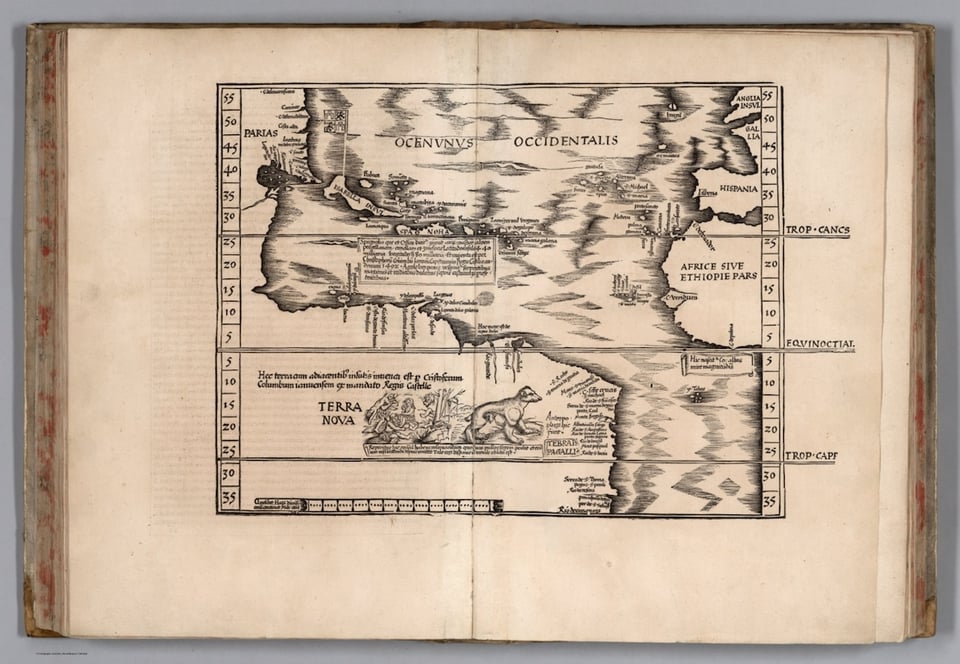

My point in that piece, however, was right there in the title: “Asking Good Questions Is Harder Than Giving Great Answers.” If we are going to grade the “intelligence” of AI based on its ability to answer PhD-level questions, we are missing a critical point about PhD-level work: In advanced research what we value the most is not the ability to crank out airtight answers, but the ability to formulate distinctive, uncommon, and often open-ended questions. This terra nova is the fertile ground on which new ideas and interpretations bloom.

A case in point: “How Long Is the Coast of Britain?” If you are unfamiliar with this question, it seems worthy of no more than a quick Google search. But if you are familiar with this question, you know that it gave rise to an entirely new field in mathematics. It’s the title of a delightful little 1967 study by Benoît Mandelbrot. Mandelbrot noticed that if you zoom in on a map, a small section of coastline has the same squiggly shape as a larger section, and that’s true for an even smaller section as well. The jaggedness repeats and repeats, down to the level of tiny inlets. This greatly complicates the process of measuring the coast. Prior estimates essentially used a ruler of a specific size, laid end to end over the zigs and zags, to approximate an overall length. However, that is not really an honest accounting, Mandelbrot realized, because a ruler of a different size will give you a rather different measurement, as it will touch the coastline in more or fewer places. In fact, these measurements might be off by orders of magnitude. A curious result! A seemingly simple question had led Mandelbrot to imagine a new kind of geometry:

Seacoast shapes are examples of highly involved curves such that each of their portion can — in a statistical sense — be considered a reduced-scale image of the whole. This property will be referred to as “statistical self-similarity.” To speak of the length for such figures is usually meaningless…’the left bank of the Vistula, when measured with increased precision, would furnish lengths ten, hundred or even thousand times as great as the length read off the school map.’

Through his potent thought experiment, Mandelbrot developed a novel category of shapes that are somehow compact and yet infinite; from this flowed fractal geometry and its offshoots in contemporary mathematics.
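For readers who want to see the ruler effect in numbers, here is a minimal sketch in Python. It assumes a Koch curve as a stand-in for a coastline (a textbook fractal, not Mandelbrot's data or his method), and the function names `koch_points` and `ruler_measurements` are hypothetical, invented for this illustration. It measures one fixed curve with a series of shrinking rulers, using one simple way of laying the ruler: stepping between evenly spaced vertices.

```python
import math


def koch_points(depth):
    """Return the vertices of a Koch curve from 0 to 1 after `depth` refinements.

    Points are complex numbers (x + yj). The Koch curve is an illustrative
    stand-in for a coastline, not real map data.
    """
    points = [0j, 1 + 0j]
    turn = complex(math.cos(math.pi / 3), math.sin(math.pi / 3))  # 60-degree rotation
    for _ in range(depth):
        refined = []
        for a, b in zip(points, points[1:]):
            third = (b - a) / 3
            # Replace each straight segment with four shorter ones around a bump.
            refined += [a, a + third, a + third + third * turn, a + 2 * third]
        refined.append(points[-1])
        points = refined
    return points


def ruler_measurements(depth):
    """Measure one fixed curve with rulers of length 3**-n for n = 0..depth.

    A ruler of length 3**-n is laid between every 4**(depth - n)-th vertex,
    so a longer ruler skips over the finer inlets.
    """
    points = koch_points(depth)
    results = []
    for n in range(depth + 1):
        stride = 4 ** (depth - n)
        touched = points[::stride]
        length = sum(abs(q - p) for p, q in zip(touched, touched[1:]))
        results.append((3.0 ** -n, length))
    return results


if __name__ == "__main__":
    for ruler, length in ruler_measurements(6):
        print(f"ruler {ruler:.5f} -> measured length {length:.3f}")
```

On this toy curve, each time the ruler shrinks to a third of its size the measured length grows by a factor of 4/3, and nothing stops that growth as the curve is refined further. The Koch curve is exactly self-similar, whereas Mandelbrot's coastlines are self-similar only in the statistical sense he describes, so the sketch shows the flavor of the argument rather than his actual figures.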

* * *

So the open-ended question I pose here is: Instead of answering our prompts, can AI prompt us to conceive illuminating new research questions? In its current popular forms, from LLM chatbots to discipline-specific machine-learning AI, it seems poorly designed to do that. Structurally and interface-wise, Claude, ChatGPT, and Gemini are mostly answer-machines for our questions right now. Yes, they can ask us questions in response to our prompts, reversing the polarity briefly, but they are designed to be on the A side of Q&A. We prompt them.

Highly specialized machine learning/artificial intelligence tools, trained on enormous data sets and running on warehouse-scale computers, are also constructed to seek answers, generally for more complex problems than the chatbots. Using these tools, researchers are able to navigate incredibly large problem spaces with greater ease and far greater speed. For instance, Google DeepMind’s AlphaFold can run through a vast number of ways proteins might be assembled and interact. But that problem space, while gigantic — in the case of AlphaFold, handling over 200 million protein structure predictions — is ultimately bounded, well-defined, and operating according to known rules in molecular biophysics and biochemistry.

Similarly, there has been considerable recent news about AI solving previously unsolved problems in mathematics. A good way to get beyond the superficial coverage of this development is to track leading mathematician Terence Tao’s GitHub page, which carefully documents exactly what AI contributed to each mathematical solution, and how well it did. It’s a mixed bag right now, despite major advances, but for our purposes, it is worth underlining that these open math questions had already been developed by human beings, or mostly a human being, since a preposterously large number of them came from the brain of Paul Erdős.

In short, AI progress in some areas of research is impressive but nevertheless part of what the historian and philosopher of science Thomas Kuhn called “normal science,” the important work of filling out existing models and answering lingering questions through careful experiments and studies — “puzzle-solving” within the existing conceptual frameworks of a discipline. (Note that AI as “normal science” is different from, but not entirely unrelated to, AI as “normal technology,” a parallel critique suggesting that AI, while an extremely powerful tool, will be slowly integrated into modes of knowledge work we already understand, rather than revolutionizing them.) The rapid acceleration, but overall normality, of AI-assisted research was recently confirmed by a large study in Nature, “Artificial intelligence tools expand scientists’ impact but contract science’s focus,” by Qianyue Hao, Fengli Xu, Yong Li, and James Evans:

[The] adoption of AI in science presents what seems to be a paradox: an expansion of individual scientists’ impact but a contraction in collective science’s reach, as AI-augmented work moves collectively towards areas richest in data. With reduced follow-on engagement, AI tools seem to automate established fields rather than explore new ones, highlighting a tension between personal advancement and collective scientific progress.

AlphaFold is not going to pause one day, blank the screen of a startled biochemist, and ask pointed questions in a green phosphor font about the nature of proteins, which still have many strange and poorly understood functions. If we had had powerful LLMs in 1966 rather than 2026, and had trained them on all of the existing books and articles that predated that year (the Beatles’ “Tomorrow Never Knows” playing in the background as the GPUs hummed), the resulting AI would not be curious about the length of the coast of Britain.

* * *

Kuhn’s famous answer to where new questions and frameworks come from is the repeated human encounter with anomalies, bits of data or new sources that don’t align well with our existing knowledge and theories. Eventually the cognitive dissonance and friction build until they spark challenging new questions. If that’s the case, can we design AI systems to help highlight the unusual, the understudied, the largely invisible, which might prompt human minds to consider unexpected ideas?

In my discussion last year of AI-assisted library interfaces, I was blind to this possibility, focusing instead on using LLMs to surface even better matches for what a researcher was already seeking. But it might be worth surprising scholars from time to time rather than always giving them perfect alignment. This surprise could take the form of a sidebar of items that wouldn't show up in a standard Boolean search. Or a tool could scan all of the relevant material and return an assessment of what hasn’t been studied in this particular area. This would be the opposite of AI’s current inclination toward summarization and constant narrowing. Now that it’s trivial to create small AI agents, a library could also provide a service to notify a researcher when new items are digitized or accessioned that may be relevant to their work. All of these services could take advantage of the much larger context windows — the length of input text — that today's LLMs can handle. For example, it would be useful to have a scholar upload a research brief, rather than typing in mere keywords, when beginning a search and discovery process.

In addition to repeated encounters with the unusual, new ideas can also come from open-ended noodling with familiar resources and data. In “AI Agents for Economics Research,” Anton Korinek describes a healthy division of labor between the human mind and AI agents that maintains the former’s primacy: “While these systems compile existing knowledge rather than generating novel insights, they speed up an important part of the research process by automating the time-intensive work of information gathering and initial synthesis.” Consider an idea; ask the agent to assemble the relevant data to see if there’s anything to it; revise and repeat as the inquiry develops. The historian Benjamin Breen has used AI coding tools to rapidly build interactive environments that combine cultural heritage collections and LLMs so that he and other scholars can immerse themselves in an era or set of documents in a free-form, thought-provoking way. The “Premodern Concordance” Breen created with fellow intellectual historian Mackenzie Cooley, a prototype of an exploratory website that covers natural history books from García de Orta to William James, helps the prospecting researcher discover latent connections across texts written in different times and languages. This kind of digital project would have previously taken years of effort at a digital humanities center; with tools like Claude Code, one can imagine quickly producing such a vibrant early-stage research tool for a single dissertation or article.

We may very well look back on our first years of engagement with AI in forms like ChatGPT as we now look back on AOL before the flourishing of the World Wide Web — a comfortable, contained, and relatively inflexible introduction to a new digital world. The flexibility and expansiveness of the Web — the fact that anyone could assemble sites and services from servers, collections, and data from around the world — made it a much better environment for the generation of new ideas.

We should also remember that human beings still have plenty of ways to conceive new ideas that don’t use AI, or any technology at all. Benoît Mandelbrot was obsessed with maps from a young age, developing insights into them that would elude most other people, and it is clear from his biography that his years in IBM’s highly interactive, interdisciplinary research group prompted him in directions he could not have foreseen. We human beings can find ourselves thinking new things simply by having coffee with another person, or by reading a good book they wrote.